Summary

The NIST AI Risk Management Framework is a voluntary guidance document that helps organizations manage the risks of artificial intelligence systems. It provides a structured, flexible approach for incorporating trustworthiness into how AI is designed, developed, deployed, and evaluated. Organizations that adopt the framework can build greater confidence in their AI systems, reduce the likelihood of harmful outcomes, and demonstrate accountability to customers and regulators. As AI becomes more embedded in business operations, the AI RMF offers a common language and practical structure for responsible governance across industries.

Artificial intelligence can process information and generate outputs at a scale and speed that traditional software cannot. However, many AI systems operate in ways that are difficult for people to interpret. This introduces a key operational challenge: understanding how AI systems generate results and influence business decisions.

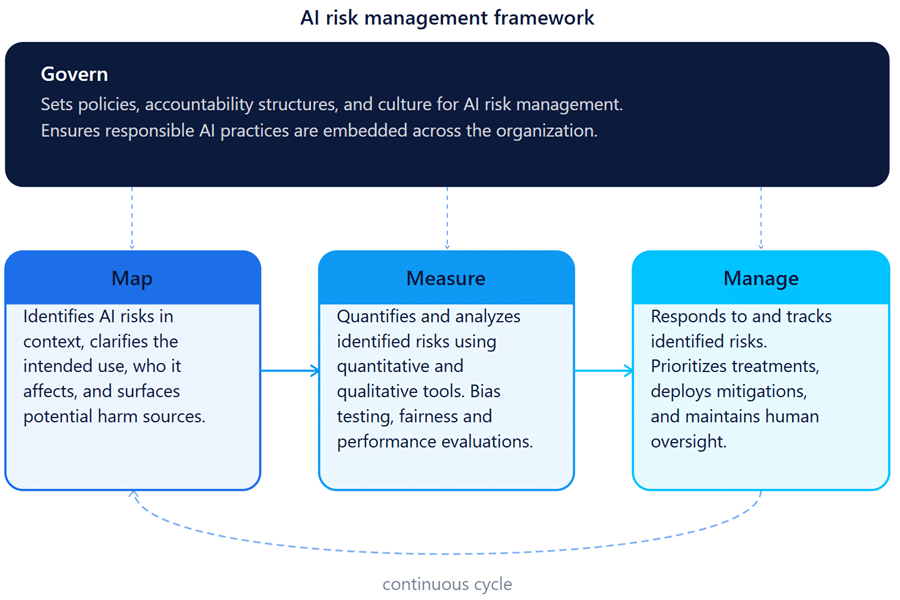

The NIST AI RMF addresses this through four core functions: Govern, Map, Measure, and Manage, which guide organizations through identifying, analyzing, and responding to AI risks throughout the full system lifecycle. Developed over 18 months with input from more than 240 organizations, it is designed to adapt as AI technology evolves, and is complemented by a practical Playbook, Roadmap, Crosswalks, and a dedicated Generative AI Profile released in 2024.

What Is the NIST AI Risk Management Framework?

Released on January 26, 2023, the AI RMF is the product of an 18-month collaborative effort between NIST and hundreds of public and private stakeholders. Its core goal: give organizations a practical, flexible, non-prescriptive tool for navigating the complex risks AI systems introduce.

Unlike a compliance checklist or a regulatory mandate, the AI RMF is designed to be voluntary, rights-preserving, non-sector-specific, and use-case agnostic. A hospital, a bank, a startup, and a federal agency can all use it, each adopting as many or as few elements as fit its context.

Importantly, the framework is intended to be a living document. NIST has committed to reviewing and updating it regularly as AI technology evolves, with a formal community review expected no later than 2028.

Why It Matters for Organizations

AI systems can fail in ways that are hard to predict and harder to undo. The AI RMF exists because the stakes are high and because most organizations don’t have a ready-made playbook for managing these risks.

The framework addresses two categories of audience:

Primary audience: Organizations and teams that actively manage the design, development, deployment, evaluation, and use of AI systems, such as developers, product managers, risk officers, and procurement teams.

Secondary audience: Entities that inform or shape AI practices without building systems directly, such as trade associations, standards bodies, researchers, advocacy groups, and policymakers.

For businesses, the practical benefits are substantial. The AI RMF helps organizations build and maintain trust with customers and regulators, integrate responsible practices before problems emerge (rather than scrambling after), and create internal accountability structures that survive staff turnover or leadership change.

How the Framework Was Built

In July 2021, NIST issued a federal Request for Information, launching the first formal public input phase and inviting responses from organizations across all sectors. That October, the first of multiple public workshops gathered perspectives from companies, government agencies, academia, and civil society. By December 2021, NIST had published an initial Concept Paper for public review, inviting early feedback on the framework’s structure and scope.

A first complete draft followed in March 2022, released for written public comment alongside a second workshop. A revised second draft was issued in October 2022, incorporating feedback from hundreds of commenters, with a third public workshop held to refine the final document. On January 26, 2023, NIST officially published AI RMF 1.0, along with a companion Playbook, Roadmap, Crosswalks, and Perspectives documents. In July 2024, the framework was extended further with the release of a dedicated Generative AI Profile (NIST-AI-600-1), addressing the unique risks posed by large language models and similar systems.

The result reflects approximately 400 sets of formal comments from more than 240 organizations.

The Generative AI Profile

In July 2024, NIST released a significant extension to the core framework: NIST-AI-600-1, the Generative AI Profile. As large language models, image generators, and similar systems became ubiquitous in enterprise settings, NIST recognized that the original framework’s general guidance needed to be supplemented with direction tailored to generative AI’s specific risk profile.

The Generative AI Profile helps organizations identify the unique risks that generative AI introduces, including hallucinations, misinformation, intellectual property concerns, and misuse of synthetic media. It also proposes actions for managing those risks in a way that aligns with organizational goals and priorities.

For businesses that have already deployed large language models in customer-facing or internal workflows, the Generative AI Profile offers a structured lens for evaluating whether those deployments are appropriately governed and monitored.

The Four Core Functions

Govern:

- Establishes organizational policies, accountability structures, and culture around AI risk management. It ensures responsible AI is embedded across the organization.

Map:

- Identifies and categorizes AI risks in context. Organizations clarify the intended use of an AI system, understand who it affects, and surface potential sources of harm before they manifest.

Measure:

- Assesses and analyzes identified risks using both quantitative and qualitative tools. This includes bias testing, performance evaluation, and fairness assessments across diverse demographic groups.

Manage:

- Responds to and tracks the risks that have been identified and measured. This includes prioritizing risk treatments, deploying mitigations, and maintaining ongoing human oversight for high-stakes decisions.
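
To make the Measure function more concrete, here is a minimal sketch of one possible fairness check: comparing selection rates across demographic groups. The framework does not prescribe this metric. The function names, the sample records, and the 0.2 review threshold are all hypothetical and chosen only for illustration.

```python
from collections import defaultdict

# Hypothetical review threshold; an organization would set its own
# based on its risk tolerance. Not a value taken from the AI RMF.
REVIEW_THRESHOLD = 0.2


def selection_rates(records):
    """Return the share of positive outcomes per group.

    `records` is an iterable of (group, was_selected) pairs.
    """
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in records:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {group: selected[group] / totals[group] for group in totals}


def parity_gap(rates):
    """Difference between the highest and lowest group selection rates."""
    return max(rates.values()) - min(rates.values())


# Illustrative, made-up outcomes from a model for two groups.
records = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", True), ("B", False), ("B", False), ("B", False),
]

rates = selection_rates(records)
gap = parity_gap(rates)
needs_review = gap > REVIEW_THRESHOLD

print(rates, gap, needs_review)
```

A single number like this is only a starting signal. In the framework's terms, a flagged gap would feed into Manage, where the organization decides how to prioritize and treat the risk.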

AI RMF Profiles

An AI RMF Profile is a customized application of the NIST AI Risk Management Framework tailored to a specific organization, sector, or use case. Rather than applying the framework’s four functions generically, a profile shapes those functions around the particular goals, risk tolerance, and resources of the organization using them. A profile for an AI system used in hiring, for example, would look different from one developed for a fair housing application or a healthcare diagnostic tool.

Organizations that develop profiles are better equipped to make risk management practical and relevant to their work. Instead of working from abstract principles alone, they can use profiles to document where they currently stand and where they need to be, which makes it easier to identify gaps, allocate resources, and demonstrate accountability to regulators, customers, and affected communities. Profiles also allow organizations to benchmark their approach against others operating in the same sector or using similar technologies.

The framework distinguishes between two types of temporal profiles. A current profile describes how an organization is managing AI risk today, capturing the risks and outcomes associated with its existing systems and practices. A target profile describes the desired future state and the outcomes an organization is working toward based on its risk management goals and priorities. The gap between these two profiles reveals what needs to change, and that gap analysis becomes the basis for a structured action plan.
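
The comparison between a current and a target profile can be sketched in a few lines of code. This is an illustrative sketch only: the framework does not define a scoring scheme, so the 0 to 4 maturity scale, the `Profile` class, and the sample scores below are assumptions made for the example.

```python
from dataclasses import dataclass, field

# The four core functions named by the framework.
FUNCTIONS = ("Govern", "Map", "Measure", "Manage")


@dataclass
class Profile:
    """Hypothetical profile: a maturity level (0-4) per core function."""
    name: str
    scores: dict = field(default_factory=dict)


def gap_analysis(current, target):
    """Return (function, gap) pairs where the target exceeds the current
    level, ordered from the largest gap to the smallest."""
    gaps = []
    for function in FUNCTIONS:
        gap = target.scores.get(function, 0) - current.scores.get(function, 0)
        if gap > 0:
            gaps.append((function, gap))
    return sorted(gaps, key=lambda item: item[1], reverse=True)


# Made-up scores for illustration.
current = Profile("current", {"Govern": 2, "Map": 1, "Measure": 1, "Manage": 2})
target = Profile("target", {"Govern": 3, "Map": 3, "Measure": 2, "Manage": 2})

action_plan = gap_analysis(current, target)
for function, gap in action_plan:
    print(f"{function}: raise maturity by {gap} level(s)")
```

Sorting by gap size is one simple way to order an action plan. A real plan would also weigh the severity of the underlying risks and the resources available.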

Profiles are also designed to work across sectors. Cross-sectoral profiles address risks that arise from models or applications used in multiple industries, such as large language models or cloud-based services, where the same underlying technology may present similar risks in very different organizational contexts. The framework does not prescribe a fixed profile template, which allows each organization to build a profile that genuinely reflects its situation rather than following a one-size-fits-all structure.